Runway and Luma Labs Release AI Video APIs

AI video tech is about to get a lot easier for developers and users to access. Runway and Luma Labs, two pioneers in AI video, have launched APIs for their tools: Runway Gen-3 Alpha Turbo and Luma Dream Machine. Though access is limited for now, expect more models to roll out soon.

These APIs let app developers integrate video-generating AI right into their own platforms. Imagine a Chrome extension that generates a short video reply to a post on X, instead of you typing a response or picking a GIF. The possibilities are huge.

You could even run videos through a pipeline for batch processing or create tools that specialize in AI video editing. A shot list covering different angles of the same content could cut down the hassle of producing videos in bulk.
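As a rough illustration of the shot-list idea, the sketch below expands one subject into a prompt per camera angle and hands each prompt to a submit function. Everything here is hypothetical: `build_shot_prompts`, `run_batch`, and the stand-in `fake_submit` are made-up names for illustration, and neither Runway's nor Luma's actual API calls are shown. In a real tool, `submit` would wrap whichever video API you use.

```python
from typing import Callable, Dict, List


def build_shot_prompts(subject: str, angles: List[str]) -> List[str]:
    """Combine one subject with each camera angle to form a shot list."""
    return [f"{subject}, {angle}" for angle in angles]


def run_batch(prompts: List[str], submit: Callable[[str], str]) -> Dict[str, str]:
    """Send every prompt through `submit` and map prompt -> returned job reference.

    `submit` is a placeholder for a real API client call (an assumption,
    not part of any vendor SDK).
    """
    return {prompt: submit(prompt) for prompt in prompts}


def fake_submit(prompt: str) -> str:
    """Stand-in for a real API request; just echoes a label for the prompt."""
    return f"queued:{prompt}"


shot_list = build_shot_prompts(
    "a red bicycle leaning on a brick wall",
    ["wide shot", "close-up", "low angle"],
)
jobs = run_batch(shot_list, fake_submit)
for prompt, job in jobs.items():
    print(prompt, "->", job)
```

Passing the submit function in as a parameter keeps the shot-list logic independent of any one provider, so the same list could be sent to different video models for comparison.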

Runway API

Runway’s API for the Gen-3 Alpha Turbo model is now live. It lets developers easily add generative AI video to their apps. Early users include Omnicom, a leader in creative advertising, showing the API’s power for enterprise apps.

The launch is being phased, with early access for large teams. Over the next few weeks, access will open up to more users.

More details are here: https://runwayml.com/news/introducing-the-runway-api.

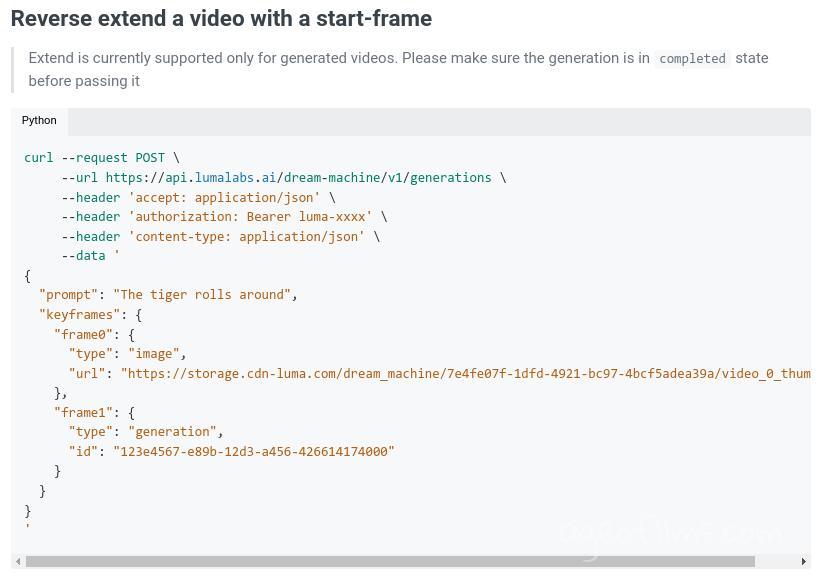

Luma’s Dream Machine API

Just hours after Runway, Luma launched its own Dream Machine API. Developers can now use it to build video-generating apps outside the Luma AI website. Hugging Face already has a demo up, showing what’s possible with this model.

Before, Dream Machine was only accessible through Luma’s website. Now, anyone from individual developers to large teams can start experimenting. Luma’s goal is to make visual storytelling easier, allowing more creators to bring their ideas to life.

You can find the documentation here: https://docs.lumalabs.ai/docs/api.

A demo version is available on Hugging Face: https://huggingface.co/spaces/akhaliq/dream-machine

Luma API pricing is $0.0032 per million generated pixels.

At 1280×720 (about 0.92 million pixels per frame), that is roughly $0.003 per frame, or about $0.35 for a 5-second video at 24 fps.

That comes to roughly $250 for an hour-long video. Of course, not every generation will be usable, so expect to double or even triple that, and for some demanding folks the multiplier could be even bigger. Still, these kinds of prices are quite affordable.
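The pricing arithmetic can be checked with a few lines of Python. This is a minimal sketch that assumes billing is simply width × height × number of frames at the quoted $0.0032 per million pixels. The function name `generation_cost` is made up for illustration, and Luma's actual billing rules may differ.

```python
PRICE_PER_MILLION_PIXELS = 0.0032  # USD, the rate quoted above


def generation_cost(width: int, height: int, fps: int, seconds: int,
                    price_per_mpx: float = PRICE_PER_MILLION_PIXELS) -> float:
    """Estimate cost in USD, assuming every pixel of every frame is billed."""
    total_pixels = width * height * fps * seconds
    return total_pixels / 1_000_000 * price_per_mpx


clip = generation_cost(1280, 720, fps=24, seconds=5)
hour = generation_cost(1280, 720, fps=24, seconds=3600)

print(f"5-second clip: ${clip:.2f}")   # 5-second clip: $0.35
print(f"1-hour video:  ${hour:.2f}")   # 1-hour video:  $254.80

# Budget for unusable takes: multiply by the number of attempts per kept clip.
print(f"1 hour at 3 attempts per keeper: ${hour * 3:.2f}")
```

The last line reflects the point about retries: if only one generation in three is usable, the effective cost per finished hour is about three times the raw figure.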

Amit Jain, co-founder and CEO of Luma AI, said in a statement published as part of a press release: “Our creative intelligence is now available to developers and builders around the world. Through Luma’s research and engineering, we aim to bring about the age of abundance in visual exploration and creation so more ideas can be tried, better narratives can be built, and diverse stories can be told by those who never could before.”

Both Runway’s and Luma’s APIs arrive shortly after Adobe previewed its Firefly Video AI, which focuses on training with public-domain data. However, Firefly Video is still behind a waitlist and isn’t yet available for developers to build apps on.

Published: Sep 21, 2024 at 12:27 PM
