Genmo Launches Mochi 1: A New Open-Source AI Video

Wow. We have a new open-source AI video generator!

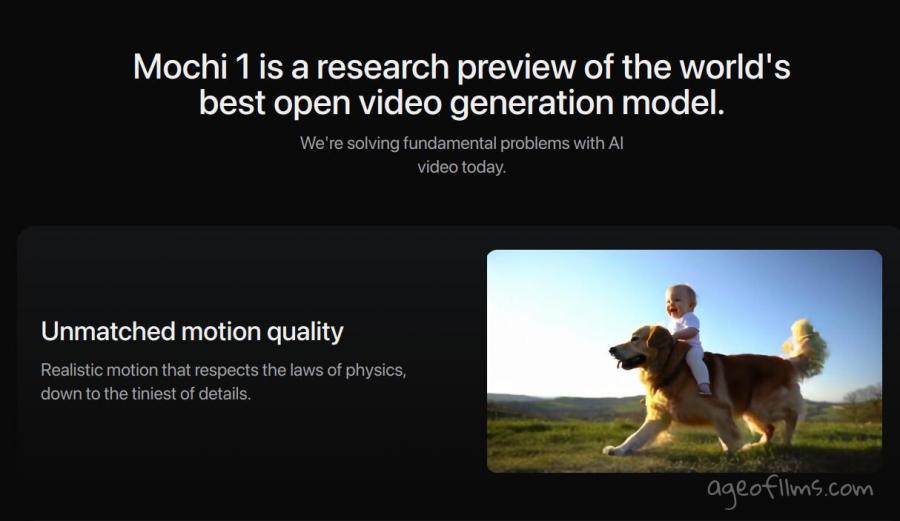

AI video startup Genmo has unveiled Mochi 1, a new open-source model designed for high-quality video generation from text prompts.

What sets Mochi 1 apart is its free and open access under the permissive Apache 2.0 license, while competitors often charge premium fees.

The model’s code and weights can be downloaded for free on Hugging Face. However, it requires a hefty setup of at least 4 Nvidia H100 GPUs to run locally. For those without that kind of hardware, Genmo offers a free playground where users can test Mochi 1's video capabilities at 480p resolution. A higher-definition version, Mochi 1 HD, is expected later this year.

Mochi 1 offers strong motion quality and prompt adherence, producing smooth, coherent videos at 30 frames per second. It accurately captures detailed actions and settings based on user prompts. While the current version outputs at 480p and has some minor distortions with extreme motion, the model shows significant promise for the future of open-source video generation.

Genmo also raised $28.4 million in Series A funding, with investors including NEA, The House Fund, and others. Their mission is to push the boundaries of artificial general intelligence, with Mochi 1 being a major step toward that goal.

You can explore Mochi 1’s capabilities by trying it for free at genmo.ai/play.

Quick Mochi Video Test

From my tests so far...

Once I shortened my prompt to "A disorienting angled shot with a tilted horizon camera tilted to the left captures the chaos of a zombie apocalypse.", it started rendering. The result was an upward tilt rather than an angled shot, but that's expected: none of the other models seem to like doing a Dutch angle either. The lighting also came out too dark, so I won't share it.

For the next prompt, I made sure to ask for a bright scene. What was interesting to find was the real-time preview of the generation cooking:

As I said, no model likes doing a Dutch angle, but the model's choice of replacement camera angle and movement was good: it started low and carried on with a tracking shot from behind the woman.

Despite an obvious lack of detail, you can see that the anatomy, the movements and even the fabric dynamics are very good. IMO, decent quality for an open-source model. Wish I had the hardware to run it.

Enter Quantized Mochi

Updated on 25th of October.

Open-source AI video generators are getting smaller and easier to run locally. The first community ComfyUI plugin is now live, running a quantized version on a 4090 GPU with less than 20GB of VRAM.

This was reposted by Genmo on X and originally shared by Jukka Seppänen (@Kijaidesign). He mentioned that shorter clips are fairly quick to generate, with around 49 frames taking 5 minutes. Longer clips are slower: 163 frames took about 20 minutes. There’s room for more speed improvements, though.

Interestingly, you can start seeing the motion with as few as 4 steps, which could help speed up finding the right prompt and seed.

Another user reported running it on a 4070 with 12GB of VRAM: using the t5_v1.1-xxl GGUF model, they generated 49 frames at 720x480 resolution in 13 minutes.
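
To put those community timings side by side, here is a rough back-of-the-envelope sketch in Python. It assumes the clips play back at the 30 frames per second quoted for Mochi 1 earlier in this post, and it treats the reported "around" and "about" figures as exact, so read the results as ballpark numbers only. The function names are made up for this example.

```python
# Reported timings from this post: (label, frames, minutes to generate).
# The figures are approximate ("around 49 frames", "about 20 minutes").
RUNS = [
    ("4090, short clip", 49, 5),
    ("4090, long clip", 163, 20),
    ("4070 12GB, 720x480", 49, 13),
]

# Assumption: clips play back at the 30 fps quoted for Mochi 1.
FPS = 30


def seconds_per_frame(frames, minutes):
    """Average generation time per frame, in seconds."""
    return minutes * 60 / frames


def clip_seconds(frames, fps=FPS):
    """Length of the finished clip, in seconds of video."""
    return frames / fps


for label, frames, minutes in RUNS:
    print(
        f"{label}: {clip_seconds(frames):.2f} s of video, "
        f"{seconds_per_frame(frames, minutes):.1f} s to generate each frame"
    )
```

By this estimate the 4090 needs a little over 6 seconds per frame on the short clip and a little over 7 on the long one, so the longer clip costs roughly 20% more time per frame. The 4070 run comes out at about 16 seconds per frame.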

Get the quantized Mochi version here: https://github.com/kijai/ComfyUI-MochiWrapper

For more open-source AI video models, see https://ageofllms.com/ai-news/ai-fun/pyramid-flow-opensource-ai-video

Last modified 25 October 2024 at 11:23

Published: Oct 23, 2024 at 3:48 PM
