Alibaba's Open-Source QwQ-32B Preview AI Model Challenges ChatGPT

Alibaba’s latest AI model reportedly rivals OpenAI’s reasoning models, with strong results in reasoning, programming, and mathematics.

Alibaba has unveiled QwQ-32B Preview, a reasoning-focused AI model designed to challenge OpenAI’s offerings such as ChatGPT. Combining a robust architecture, strong problem-solving, and open-source availability, it sets a high bar for openly available models.

Noteworthy Features

Advanced Framework

QwQ-32B Preview uses a 64-layer design with grouped-query attention: 40 query (Q) heads share 8 key/value (KV) heads. With a 32,768-token context length, it can handle long, detailed prompts.
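
To make the attention layout concrete, here is a rough back-of-the-envelope sketch of why sharing 8 KV heads among 40 query heads matters at long context lengths. The layer count, head counts, and 32,768-token context come from the article; the head dimension of 128 and the 16-bit cache are assumptions for illustration, `kv_cache_bytes` is a hypothetical helper, and the estimate ignores runtime overhead.

```python
# Back-of-the-envelope KV cache estimate (illustrative, not an official figure).
NUM_LAYERS = 64          # from the article
NUM_Q_HEADS = 40         # query heads, from the article
NUM_KV_HEADS = 8         # key/value heads, from the article
CONTEXT_TOKENS = 32_768  # context length, from the article
HEAD_DIM = 128           # ASSUMPTION: per-head dimension
BYTES_PER_VALUE = 2      # ASSUMPTION: 16-bit (fp16/bf16) cache entries

GIB = 1024 ** 3


def kv_cache_bytes(num_kv_heads: int, tokens: int) -> int:
    """Hypothetical helper: bytes needed to cache keys and values for `tokens` tokens."""
    # Factor 2: every layer stores one key tensor and one value tensor.
    return 2 * NUM_LAYERS * num_kv_heads * HEAD_DIM * BYTES_PER_VALUE * tokens


# In grouped-query attention, each KV head is shared by a group of query heads.
queries_per_kv_head = NUM_Q_HEADS // NUM_KV_HEADS

# Cache size with the article's 8 KV heads at full context.
gqa = kv_cache_bytes(NUM_KV_HEADS, CONTEXT_TOKENS)

# Hypothetical comparison: one KV head per query head (standard multi-head attention).
mha = kv_cache_bytes(NUM_Q_HEADS, CONTEXT_TOKENS)

print(f"query heads per KV head: {queries_per_kv_head}")
print(f"KV cache at full context (8 KV heads): {gqa / GIB:.1f} GiB")
print(f"KV cache with 40 KV heads: {mha / GIB:.1f} GiB")
```

Under these assumptions, each group of 5 query heads shares one KV head, and the cache for a full 32,768-token prompt comes to about 8 GiB, versus roughly 40 GiB if every query head kept its own keys and values.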

Exceptional Performance Metrics

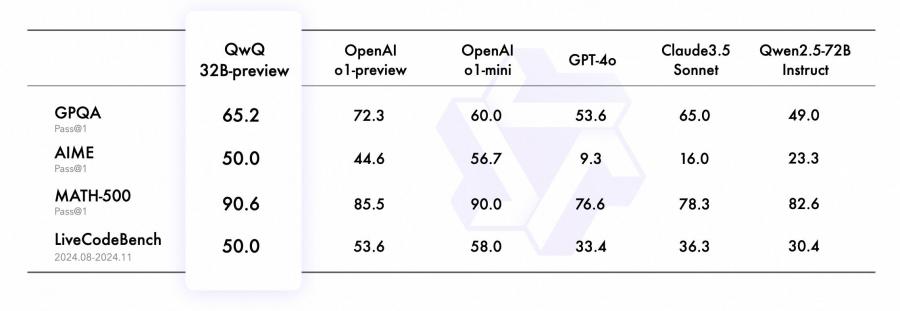

The model reports strong results across key benchmarks:

- GPQA: 65.2%, reflecting graduate-level scientific reasoning.

- AIME: 50.0%, showcasing advanced math skills.

- MATH-500: 90.6%, demonstrating comprehensive mathematical expertise.

- LiveCodeBench: 50.0%, validating programming abilities in real-world contexts.

With 32.5 billion parameters, QwQ-32B Preview outperforms OpenAI’s o1-preview and o1-mini on some of these benchmarks. Its ability to process prompts of up to 32,768 tokens highlights its potential for tackling intricate technical problems.

Thoughtful Reasoning Enhances Accuracy

By working through problems reflectively, questioning and revising its own intermediate steps before answering, QwQ-32B Preview improves its accuracy. This makes it particularly adept at puzzles, advanced math problems, and programming tasks.

Strengths and Drawbacks

While capable of self-checking its outputs to minimize errors, QwQ-32B Preview occasionally switches languages unexpectedly and struggles with tasks requiring everyday logic or common sense. Its extended processing time is another limitation, though its results often justify the wait.

Open-Source Access

Released under the Apache 2.0 license, the model is available for commercial use. However, only certain components have been released so far, which limits full replication or in-depth analysis.

GitHub: https://github.com/QwenLM/Qwen2.5

Demo: https://huggingface.co/spaces/Qwen/QwQ-32B-preview

Ollama: https://ollama.com/library/qwq

Community Feedback on QwQ

Opinions on the QwQ model on Reddit are mixed but lean positive:

- Strengths: Excellent reasoning and problem-solving, engaging for role-play, and praised for being open-source.

- Weaknesses: Often verbose, overanalyzes simple tasks, and occasionally outputs irrelevant or unexpected text (e.g., Chinese).

- Comparisons: Some prefer its reasoning over models like DeepSeek or Llama 3, though others find it too chatty or inconsistent.

- Usage: Works well on consumer GPUs but requires careful setup.

- General Sentiment: Intriguing and promising, but quirky and sometimes frustrating.
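
The point about consumer GPUs can be made concrete with simple arithmetic. The sketch below estimates how much memory the 32.5 billion weights alone occupy at a few precisions. The bit widths are illustrative assumptions, `weight_gb` is a hypothetical helper, and real deployments also need memory for the KV cache, activations, and runtime overhead.

```python
# Rough weight-memory estimate for a 32.5B-parameter model (illustrative only).
PARAMS = 32.5e9  # parameter count from the article


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Hypothetical helper: weight storage in gigabytes (10**9 bytes), ignoring overhead."""
    return params * bits_per_weight / 8 / 1e9


# ASSUMPTION: 16-, 8- and 4-bit are chosen as common example precisions.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: ~{weight_gb(PARAMS, bits):.2f} GB")
```

At 16 bits the weights need about 65 GB, well beyond a single typical consumer GPU, while a 4-bit build needs roughly 16 GB. This suggests why quantized versions and careful setup are the usual route for running the model locally.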

X (Twitter) Buzz

- @WolframRvnwlf praised QwQ’s performance, stating it surpasses models like Llama 405B and Mistral 123B in specific tests.

- @victormustar shared his experience with the model, stating:

"Still doubting QwQ-32B Preview? I tested it against some really challenging reasoning prompts, and the results are amazing 🤯. In short: it's the smartest open model ever released."

- @dani_avila7 demonstrated how QwQ integrates with VS Code via CodeGPT. Hardware recommendations included at least an M1 chip with

Last modified 30 November 2024 at 15:55

Published: Nov 30, 2024 at 2:45 PM