Gemini 2.0 Flash: Google's Next Big Leap

Google’s Gemini 2.0 Flash has arrived, and its multimodal version will roll out to everyone in January 2025.

With the new upgrade, you will be able to edit and create images straight from the model, mixing text and visuals effortlessly. It’s built for storytelling, design, and all kinds of creative work.

Key Features

1. Chat-Powered Image Editing

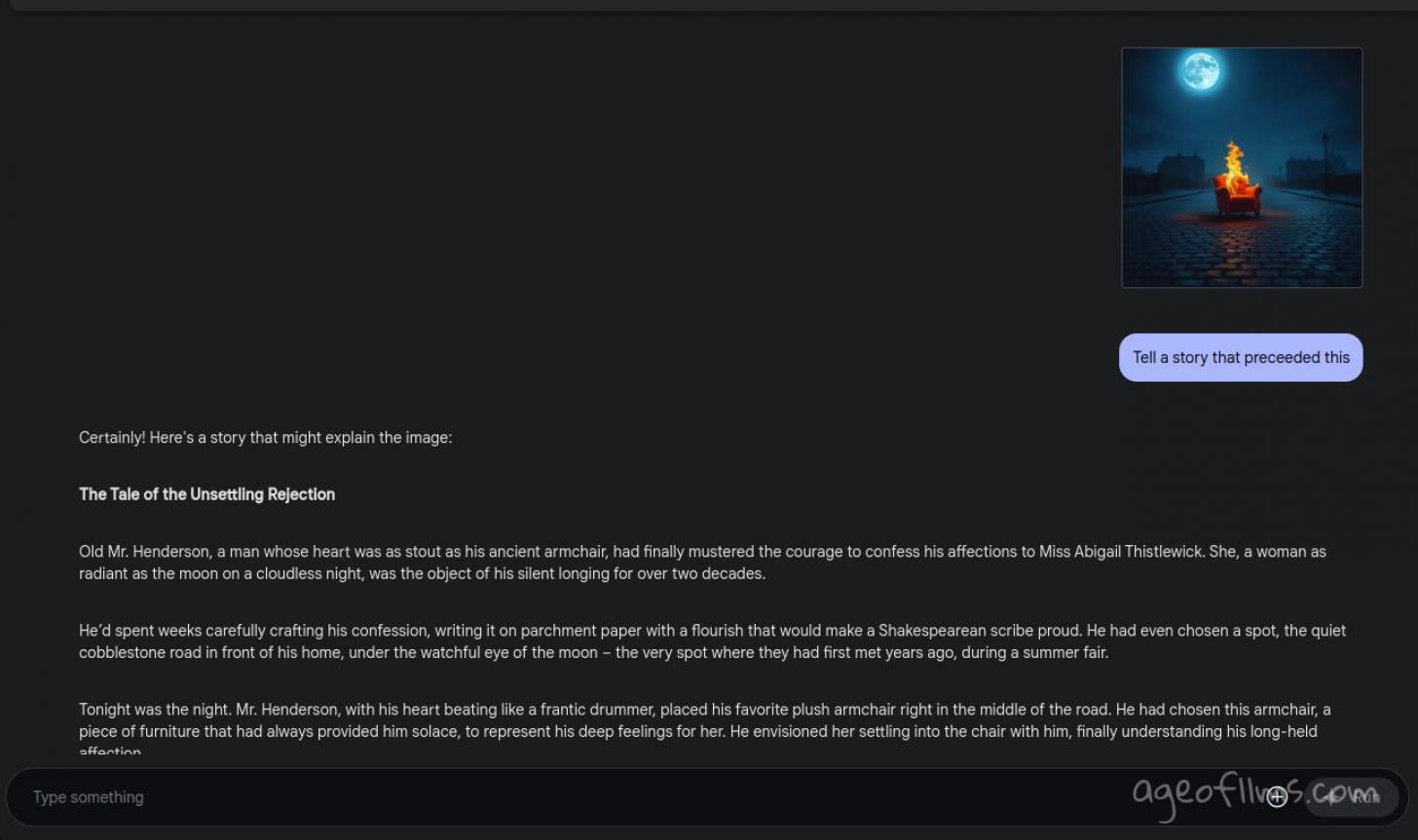

You can talk to Gemini to tweak your images over multiple steps. Upload a picture and ask for changes like adjusting colors or turning a regular car into a convertible, then keep refining the result with follow-up requests.
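To make the multi-step idea concrete, here is a minimal sketch of how a conversation like that could be assembled as a request body. It only builds the JSON payload and sends nothing. The field names (`contents`, `parts`, `inlineData`, `mimeType`, `generationConfig`, `responseModalities`) are modeled on the public Gemini REST request shape and should be treated as assumptions to check against the current API docs; the helper names are hypothetical.

```python
import base64
import json
from typing import List, Optional


def image_part(image_bytes: bytes, mime_type: str = "image/png") -> dict:
    """Wrap raw image bytes as a base64-encoded inline part (assumed field names)."""
    return {
        "inlineData": {
            "mimeType": mime_type,
            "data": base64.b64encode(image_bytes).decode("ascii"),
        }
    }


def user_turn(text: str, image_bytes: Optional[bytes] = None) -> dict:
    """A user message: an instruction, optionally with an uploaded image."""
    parts = [{"text": text}]
    if image_bytes is not None:
        parts.append(image_part(image_bytes))
    return {"role": "user", "parts": parts}


def model_turn(image_bytes: bytes) -> dict:
    """A previous model reply that contained an edited image."""
    return {"role": "model", "parts": [image_part(image_bytes)]}


def build_edit_request(history: List[dict], instruction: str) -> dict:
    """Append the next instruction to the history without mutating it."""
    return {
        "contents": history + [user_turn(instruction)],
        # Assumption: this asks for both text and image output.
        "generationConfig": {"responseModalities": ["TEXT", "IMAGE"]},
    }


if __name__ == "__main__":
    history = [
        user_turn("Make the car red.", b"placeholder-image-bytes"),
        model_turn(b"placeholder-edited-bytes"),
    ]
    request = build_edit_request(history, "Now turn it into a convertible.")
    print(json.dumps(request, indent=2))
```

The point of the sketch is that each new instruction travels with the earlier turns, including the images already produced, so the model can build on its last edit instead of starting over.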

2. Smarter Edits

The tool gets what’s happening in your images. It can fine-tune textures, shift object placements, or smooth out tricky details like a pro.

3. Text + Image in One Response

It delivers outputs that combine text and images, like illustrated recipes or labeled designs, all in one go.

4. Mix, Match, and Create

Take multiple images, add text instructions, and mash them into something new. Think stitching a cat’s face onto a pillow.

5. Play with Your Images

Explore objects from fresh angles and interact with them in ways you’ve never done before.

6. Direct Changes in Real-Time

Guide the elements of your image through advanced transformations with simple instructions.

7. Faster and More Efficient

Gemini 2.0 Flash is super quick, making it great for tasks that need instant results.

8. Built-In Image and Speech Creation

For the first time, Gemini lets you create images and even produce speech directly from the model, opening up tons of new possibilities.

9. Powering AI Agents

Google’s also working on AI agents like Project Astra and Project Mariner, powered by Gemini 2.0, to help with tasks like browsing the web or handling live info.

Try it here: https://aistudio.google.com/prompts/new_chat

Reddit Buzz: Key Takeaways

1. Google vs. OpenAI Competition

- Many commenters feel that Google is strategically overpowering OpenAI with superior computational resources and TPU clusters, which are not accessible to competitors reliant on Nvidia hardware.

- Google's experimental releases (like Gemini) are perceived as a direct challenge to OpenAI’s dominance, sparking fierce competition.

2. Performance of Gemini Models

- Gemini 1206 Experimental:

  - Mixed reviews. Some call it the best for coding and STEM knowledge, while others note persistent issues like hallucinations and instruction-following errors.

  - It's favored for translations and technical tasks but criticized for struggles with multitasking and context-switching.

- Concerns about naming confusion (e.g., "Advanced," "Flash," "Experimental") and clarity around version releases were raised repeatedly.

3. General Industry Observations

- There’s acknowledgment that compute power is critical and Google’s scale gives them an edge, but commenters also highlight the importance of open-source models (e.g., Meta’s Llama, Alibaba’s Qwen, Mistral) to avoid centralized control.

- Commenters speculate that OpenAI is delaying GPT-4.5 to counter Google's advances, and Anthropic’s Sonnet models are also seen as competitive.

4. Technical Feedback on Gemini

- Issues with specific instruction handling, context switching, and hallucinations are noted, though commenters say they are improving with newer versions.

- Code generation abilities are highlighted, with some calling it superior to ChatGPT and Claude for coding tasks, while others found the opposite.

5. Benchmarks and Usability

- Frustration over inconsistent benchmarks and claims that models are becoming specialized for certain tasks.

- Gemini’s 1M token context length and efficient refactoring capabilities are widely praised.

- Suggestions for better usability and more accessible naming conventions were frequent.
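For a feel of what the 1M token context length mentioned above means in practice, here is a rough back-of-the-envelope sketch for checking whether a set of files would fit. It assumes the common rule of thumb of about 4 characters per token for English text; real tokenizers vary, especially on code. The 8,000-token output reserve and the function names are hypothetical choices for illustration.

```python
from typing import Iterable, Tuple

CONTEXT_WINDOW_TOKENS = 1_000_000  # the 1M figure quoted in the discussion
CHARS_PER_TOKEN = 4                # rough heuristic, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Estimate token count by ceiling-dividing the character count."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(
    texts: Iterable[str], reserve_for_output: int = 8_000
) -> Tuple[int, bool]:
    """Return (estimated input tokens, whether they fit with room for a reply)."""
    total = sum(estimate_tokens(t) for t in texts)
    return total, total + reserve_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    files = ["def main():\n    pass\n" * 1000, "# README\n" * 500]
    total, ok = fits_in_context(files)
    print(f"~{total} tokens, fits: {ok}")
```

By this estimate, roughly 4 MB of plain text would fill the window, which is why commenters bring it up for whole-codebase refactoring. Treat the numbers as a sanity check, not a guarantee.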

Source: Reddit

Last modified 19 December 2024 at 19:59

Published: Dec 16, 2024 at 1:24 PM
