Microsoft Releases Phi-4: A 14B Parameter Open-Source AI Model

Microsoft has officially released Phi-4, a 14-billion parameter language model, as a fully open-source project. Available on Hugging Face under the MIT License, the model includes downloadable weights, making it accessible for research and commercial use.

Microsoft AI engineer Shital Shah shared the news on X, highlighting the excitement around the release:

“We’ve been amazed by the response to the phi-4 release… Well, wait no more. We are releasing the official phi-4 model on Hugging Face! With MIT license!!”

What Makes Phi-4 Special?

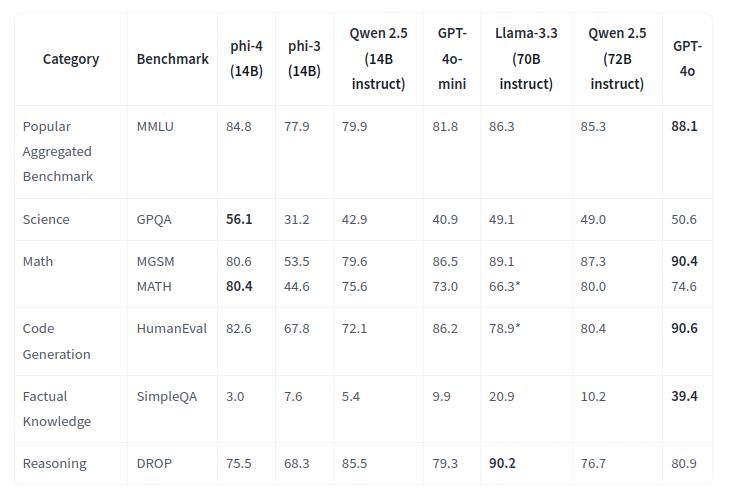

Phi-4 stands out for its focus on reasoning and efficiency. Despite being smaller than many rivals, it outperforms larger models such as Google’s Gemini Pro on math and multitask language understanding. Key highlights include:

- Scoring over 80% on tough benchmarks like MATH and MGSM.

- Exceptional performance in math-heavy fields like finance and engineering.

- Generating functional code with impressive accuracy on HumanEval.

Its streamlined 14-billion-parameter design delivers high performance without the massive computational demands of larger models. The model was trained on 9.8 trillion tokens drawn from curated public content, math-focused synthetic datasets, and high-quality academic texts. While optimized for English, it also supports limited multilingual tasks.
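To put the 14-billion-parameter figure in hardware terms, here is a rough back-of-the-envelope sketch of how much memory the weights alone would occupy at common numeric precisions. The precision table and the `weight_memory_gb` helper are illustrative assumptions, not anything published with the model, and the estimate ignores activations, the KV cache, and framework overhead, so real usage will be higher.

```python
# Rough estimate of weight memory for a 14B-parameter model.
# Assumption: the parameter count is the article's rounded figure (14 billion).
NUM_PARAMS = 14e9

# Bytes needed to store one parameter at each precision (illustrative table).
BYTES_PER_PARAM = {
    "fp32": 4.0,
    "fp16/bf16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(num_params, bytes_per_param):
    """Return the size of the weights in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


for precision, size in BYTES_PER_PARAM.items():
    print(f"{precision}: ~{weight_memory_gb(NUM_PARAMS, size):.0f} GB")
# fp32: ~56 GB
# fp16/bf16: ~28 GB
# int8: ~14 GB
# int4: ~7 GB
```

By this estimate, half-precision weights need roughly 28 GB, which is more than most consumer GPUs offer, while 4-bit quantization brings the weights down to about 7 GB.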

Why the Open-Source Release Matters

With its release on Hugging Face, businesses and researchers can now fine-tune and deploy Phi-4 for their needs. The MIT License ensures freedom for commercial applications, encouraging innovation and accessibility.
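As an illustration of what deploying the model from Hugging Face might look like, the following is a minimal sketch using the `transformers` text-generation pipeline. The repository id `microsoft/phi-4`, the system prompt, and the helper names `build_messages` and `generate_reply` are assumptions made for this example and are not taken from the article; the sketch also assumes `transformers`, `torch`, and `accelerate` are installed and that enough memory is available for a 14B model.

```python
"""Sketch: chat with Phi-4 through the Hugging Face transformers pipeline."""

# Assumption: the model's repository id on Hugging Face (not stated in the article).
MODEL_ID = "microsoft/phi-4"


def build_messages(question, system_prompt="You are a careful math tutor."):
    """Return a chat-style message list; the system prompt is a hypothetical example."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": question},
    ]


def generate_reply(question, max_new_tokens=128):
    """Load the model and answer one question.

    Downloads the weights on first use, so this is slow and memory-hungry.
    """
    import transformers  # imported lazily: only needed when actually generating

    generator = transformers.pipeline(
        "text-generation",
        model=MODEL_ID,
        model_kwargs={"torch_dtype": "auto"},  # let the library pick the stored dtype
        device_map="auto",  # place layers on available devices (needs accelerate)
    )
    outputs = generator(build_messages(question), max_new_tokens=max_new_tokens)
    # With chat input, generated_text holds the whole conversation;
    # the last entry is the assistant's reply.
    return outputs[0]["generated_text"][-1]["content"]
```

Calling `generate_reply("What is the derivative of x**2?")` would then return the model's answer as a string. Reloading the pipeline on every call keeps the sketch short; a real service would build it once and reuse it.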

Microsoft’s move aligns with the growing trend of open-source AI, making cutting-edge tools available beyond proprietary platforms. Developers are already praising Phi-4’s ability to deliver high-quality outputs without requiring extensive hardware.

Efficiency Over Scale

Phi-4 challenges the idea that bigger is always better. By focusing on efficient design, it shows that a smaller model can achieve strong results in math and reasoning tasks while reducing both costs and energy use.

Community Reactions to Phi-4

- Licensing & Release:
  - MIT license is a big win over the restrictive Microsoft license.
  - Official release on Hugging Face appreciated; earlier access was limited or unofficial.

- Performance:
  - Excels in reasoning and logic tasks.
  - Factual knowledge scores dropped due to reduced hallucinations.
  - Poor for creative writing and some knowledge-heavy tasks.

- Use Cases:
  - Great for programming, logical reasoning, and multilingual tasks.
  - Not good for creative or context-heavy work.

- Benchmarks:
  - Some users think it’s tuned to look good in tests rather than being useful.
  - Mixed reviews: good for some tasks, underwhelming for others.

- Model Size:
  - At 14B parameters, it’s too large for many with limited VRAM.
  - Users want smaller, more efficient versions.

- Community Impact:
  - Open-source release helps research on small models.
  - Highlights potential of focused reasoning but lacks factual depth.

- General Sentiment:
  - Useful for specific tasks, but limitations frustrate users.
  - Mixed feelings on Microsoft’s delay and real-world practicality.

Source: Reddit

Published: Jan 9, 2025 at 11:43 AM